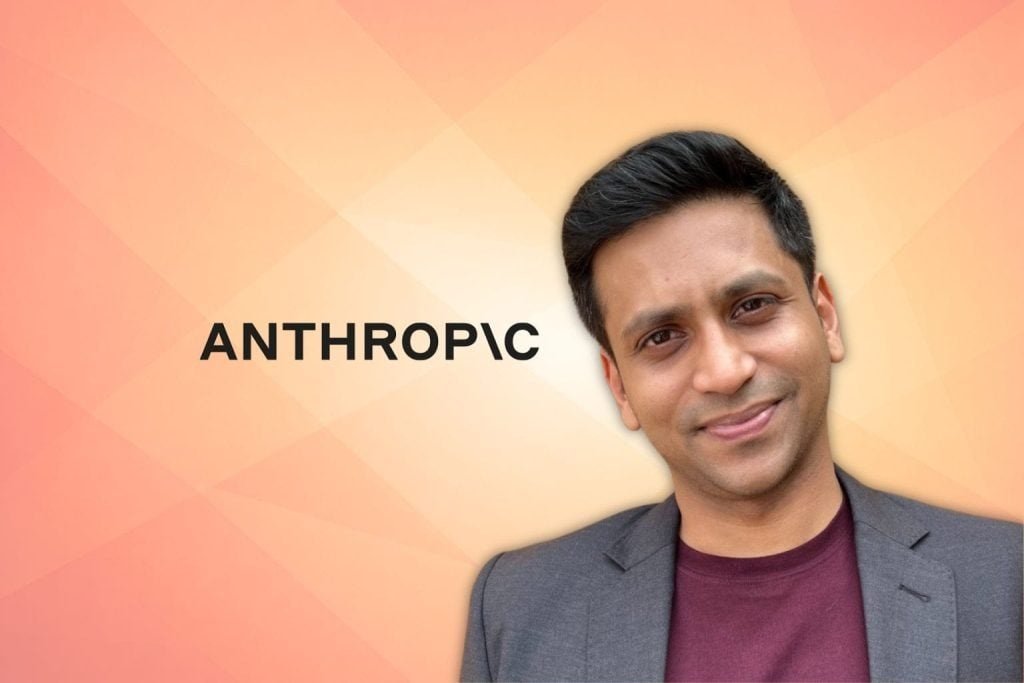

New Delhi | April 10, 2026 — Anthropic, the AI safety and research company behind the Claude LLM, has officially entered the Indian regulatory landscape by appointing Amlan Mohanty as its Head of Policy – India. The move signals Anthropic’s commitment to engaging with one of the world’s fastest-growing AI markets.

In his new role, Mohanty will lead the company’s efforts to build strategic partnerships with the Indian government, industry leaders, and civil society, ensuring that the deployment of frontier AI models aligns with national priorities and safety standards.

Safety-First Leadership

Mohanty joins Anthropic with a background in AI governance. He previously served as an Associate Fellow at the Centre for Responsible AI (CeRAI), where his research and advisory work focused on AI safety and ethical governance.

Sharing his vision on LinkedIn, Mohanty highlighted Anthropic’s “mission-driven” culture and its “safety-first approach” as key reasons for joining.

“Two weeks inside a frontier AI lab has left me feeling more convinced about the power of this technology to radically improve our lives,” Mohanty stated. “Equally, we need to be mindful of the risks and act thoughtfully.”

Strategic Context

The appointment comes at a time when the Indian government is actively drafting regulatory frameworks for artificial intelligence. By bringing in a seasoned policy expert like Mohanty, Anthropic aims to:

- Foster Trust: Build long-term relationships with Indian regulators to navigate emerging AI laws.
- Support Innovation: Ensure Anthropic’s products are accessible to Indian developers and businesses in a secure manner.
- Global Alignment: Bridge the gap between global AI safety research and India’s unique socio-economic landscape.

Mohanty’s extensive writing on AI risks and governance is expected to play a vital role in how Anthropic positions its “Constitutional AI” framework within the Indian ecosystem.